I want to find out which algorithm is best for downsizing a raster picture. By "best" I mean the one that gives the nicest-looking results.

I know of bicubic, but is there something better yet? For example, I've heard from some people that Adobe Lightroom has some kind of proprietary algorithm which produces better results than the standard bicubic that I was using. Unfortunately I would like to use this algorithm myself in my software, so Adobe's carefully guarded trade secrets won't do.

Added: I checked out Paint.NET and to my surprise it seems that Super Sampling is better than bicubic when downsizing a picture.

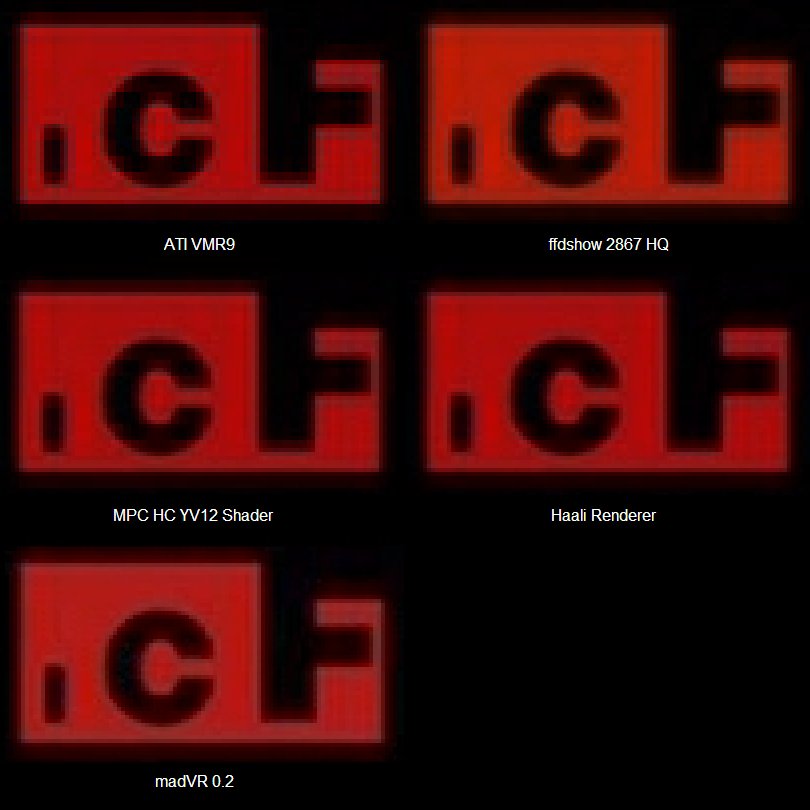

Image downscaling: Bicubic100 is more than sufficient (Catmull-Rom is a fancy name for Bicubic 50). Oh, thank you so much for these settings, I really appreciate it. As for image doubling, do I put all four alternatives on 64 neurons? On my low-power systems I skip MadVR completely and use EVR custom + D3D Fullscreen in MPC-HC with Bicubic.

That makes me wonder if interpolation algorithms are the way to go at all.

It also reminded me of an algorithm I had 'invented' myself, but never implemented. I suppose it also has a name (as something this trivial cannot be my idea alone), but I couldn't find it among the popular ones. Super Sampling was the closest one.

The idea is this: for every pixel in the target picture, calculate where it would be in the source picture.

It would probably overlap one or more source pixels. It would then be possible to calculate the overlap areas and the colors of these pixels. Then, to get the color of the target pixel, one would simply calculate the average of these colors, using their areas as 'weights'.
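The idea described above can be sketched roughly as follows. This is only an illustration under assumptions: pixels are plain numbers (one channel), the function names `area_average_1d` and `area_average_2d` are made up for this example, and the 2-D version relies on the fact that a rectangular overlap area is the product of its horizontal and vertical overlaps.

```python
def area_average_1d(src, dst_len):
    """Resize a 1-D list of numbers by area-weighted averaging (illustrative helper)."""
    scale = len(src) / dst_len                       # source pixels covered per target pixel
    out = []
    for i in range(dst_len):
        left, right = i * scale, (i + 1) * scale     # footprint of target pixel i in source coordinates
        total = weight_sum = 0.0
        j = int(left)
        while j < right and j < len(src):
            # Length of the overlap between source pixel [j, j+1) and the footprint.
            overlap = min(right, j + 1) - max(left, j)
            total += overlap * src[j]
            weight_sum += overlap
            j += 1
        out.append(total / weight_sum)               # weighted average, weights = covered lengths
    return out


def area_average_2d(img, dst_w, dst_h):
    """Run the 1-D pass over rows, then over columns."""
    rows = [area_average_1d(row, dst_w) for row in img]
    cols = [area_average_1d(list(col), dst_h) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


if __name__ == "__main__":
    # Three source pixels squeezed into two: each target pixel covers 1.5 source pixels.
    print(area_average_1d([0, 3, 6], 2))
```

For a color image the same routine would be applied to each channel separately.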

So, if a target pixel would cover 1/3 of a yellow source pixel and 1/4 of a green source pixel, I'd get (1/3·yellow + 1/4·green) / (1/3 + 1/4).

This would naturally be computationally intensive, but it should be as close to the ideal as possible, no?

Is there a name for this algorithm?

Unfortunately, I cannot find a link to the original survey, but as Hollywood cinematographers moved from film to digital images, this question came up a lot, so someone (maybe SMPTE, maybe the ASC) gathered a bunch of professional cinematographers and showed them footage that had been rescaled using a bunch of different algorithms.

The results were that, for these pros looking at huge motion pictures, the consensus was that Mitchell (a cubic filter from the same family as Catmull-Rom) is the best for scaling up and sinc is the best for scaling down. But sinc is a theoretical filter that goes off to infinity and thus cannot be completely implemented, so I don't know what they actually meant by 'sinc'. It probably refers to a truncated version of sinc. Lanczos is one of several practical variants of sinc that tries to improve on just truncating it, and it is probably the best default choice for scaling down still images. But as usual, it depends on the image and what you want: shrinking a line drawing is, for example, a case where you might prefer an emphasis on preserving edges that would be unwelcome when shrinking a photo of flowers.

There is a good example of the results of various algorithms at.

The folks at fxguide put together on scaling algorithms (along with a lot of other stuff about compositing and other image processing) which is worth taking a look at.
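As a rough illustration of the Lanczos filter mentioned above, here is a 1-D sketch. The kernel is the standard windowed sinc, sinc(x)·sinc(x/a); everything else (the helper name `lanczos_downscale_1d`, clamping at the borders, the default `a=3`, working on a plain list of numbers) is an assumption made for this example, not how any particular library does it.

```python
import math


def lanczos(x, a=3):
    """Windowed sinc: sinc(x) * sinc(x / a) for |x| < a, zero elsewhere."""
    if x == 0:
        return 1.0
    if abs(x) >= a:
        return 0.0
    px = math.pi * x
    return a * math.sin(px) * math.sin(px / a) / (px * px)


def lanczos_downscale_1d(src, dst_len, a=3):
    """Resample a 1-D list of numbers to dst_len samples (hypothetical helper)."""
    scale = len(src) / dst_len
    stretch = max(scale, 1.0)      # widen the kernel when shrinking so it also low-passes
    out = []
    for i in range(dst_len):
        center = (i + 0.5) * scale - 0.5             # target sample centre in source coordinates
        first = math.ceil(center - a * stretch)
        last = math.floor(center + a * stretch)
        acc = weight_sum = 0.0
        for j in range(first, last + 1):
            w = lanczos((j - center) / stretch, a)
            acc += w * src[min(max(j, 0), len(src) - 1)]   # clamp indices at the borders
            weight_sum += w
        out.append(acc / weight_sum)                 # normalise so flat areas stay flat
    return out
```

A 2-D image would typically be handled by running such a pass over the rows and then over the columns. The negative lobes of the kernel are what give Lanczos its sharpness, and also its tendency to ring near hard edges.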

The fxguide folks also include test images that may be useful in doing your own tests.

Now ImageMagick has an if you really want to get into it.

It is kind of ironic that there is more controversy about scaling down an image, which is theoretically something that can be done perfectly since you are only throwing away information, than there is about scaling up, where you are trying to add information that doesn't exist. But start with Lanczos.

I'd like to point out that the sinc filter is implementable without truncation on signals with finite extent. If we assume that all the samples outside of the region we know are zero, the extra terms in the Whittaker–Shannon interpolation formula disappear and we get a finite sum.
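A minimal sketch of that finite sum, assuming unit sample spacing and treating every sample outside the known range as zero (the function names are illustrative, not from any library):

```python
import math


def sinc(x):
    """Normalised sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def sinc_interpolate(samples, t):
    """Evaluate the Whittaker-Shannon sum at position t (in sample units).

    Only the known samples contribute, so the sum has len(samples) terms.
    """
    return sum(s * sinc(t - n) for n, s in enumerate(samples))


if __name__ == "__main__":
    data = [1.0, 4.0, 2.0, 8.0]
    print(sinc_interpolate(data, 2))     # at a sample position this reproduces the sample (up to rounding)
    print(sinc_interpolate(data, 1.5))   # a value in between
```

This only shows the interpolation itself. For downscaling, the kernel would additionally have to be stretched by the scale factor so that it removes the frequencies the smaller image cannot represent.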

That is a valid interpretation of the original data, even though it is likely incorrect (the world isn't black outside of our field of view). This filter still couldn't be used on live audio and video because it isn't causal, but for use in images that doesn't matter. –Jul 21 '15 at 17:34

I'm late to the party, but here's my take on this. There is only one proper way to scale an image down, and it's a combination of two methods.

1) Scale down by x2; keep scaling down until the next scale-down would be smaller than the target size. At each scaling, every new pixel = average of 4 old pixels, so this is the maximum amount of information kept.

2) From that last scaled-down-by-2 step, scale down to the target size using BILINEAR interpolation. This is important as bilinear doesn't cause any ringing at all.

3) (A bonus) Do the scaling in linear space (degamma, scale down, regamma). –Jul 6 '16 at 18:08
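The three steps from this comment could look roughly like the sketch below. It assumes a single-channel image stored as a list of rows with values in 0..1, uses a plain 2.2 power curve as a stand-in for the real sRGB transfer function, and simply drops a trailing odd row or column when halving. All function names are made up for the example.

```python
GAMMA = 2.2  # assumption: simple power curve instead of the piecewise sRGB curve


def halve(img):
    """Average each 2x2 block (a trailing odd row or column is dropped in this sketch)."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [[(img[2 * y][2 * x] + img[2 * y][2 * x + 1] +
              img[2 * y + 1][2 * x] + img[2 * y + 1][2 * x + 1]) / 4
             for x in range(w)] for y in range(h)]


def bilinear(img, dst_w, dst_h):
    """Bilinear resampling with pixel-centre alignment and clamped borders."""
    src_h, src_w = len(img), len(img[0])
    out = []
    for y in range(dst_h):
        fy = min(max((y + 0.5) * src_h / dst_h - 0.5, 0.0), src_h - 1.0)
        y0 = int(fy)
        y1 = min(y0 + 1, src_h - 1)
        ty = fy - y0
        row = []
        for x in range(dst_w):
            fx = min(max((x + 0.5) * src_w / dst_w - 0.5, 0.0), src_w - 1.0)
            x0 = int(fx)
            x1 = min(x0 + 1, src_w - 1)
            tx = fx - x0
            top = img[y0][x0] * (1 - tx) + img[y0][x1] * tx
            bottom = img[y1][x0] * (1 - tx) + img[y1][x1] * tx
            row.append(top * (1 - ty) + bottom * ty)
        out.append(row)
    return out


def downscale(img, dst_w, dst_h):
    linear = [[v ** GAMMA for v in row] for row in img]            # 3) degamma
    # 1) halve while the halved image is still at least as large as the target
    while len(linear[0]) // 2 >= dst_w and len(linear) // 2 >= dst_h:
        linear = halve(linear)
    linear = bilinear(linear, dst_w, dst_h)                        # 2) final bilinear step
    return [[v ** (1 / GAMMA) for v in row] for row in linear]     # 3) regamma
```

Working in linear light matters most for fine high-contrast detail: a black-and-white checkerboard averaged this way comes out noticeably lighter than the 0.5 you would get by averaging the gamma-encoded values directly.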

(Bi-)linear and (bi-)cubic resampling are not just ugly but horribly incorrect when downscaling by a factor smaller than 1/2. They will result in very bad aliasing akin to what you'd get if you downsampled by a factor of 1/2 and then used nearest-neighbor downsampling.

Personally I would recommend (area-)averaging samples for most downsampling tasks. It's very simple and fast and near-optimal. Gaussian resampling (with radius chosen proportional to the reciprocal of the factor, e.g. radius 5 for downsampling by 1/5) may give better results with a bit more computational overhead, and it's more mathematically sound.

One possible reason to use gaussian resampling is that, unlike most other algorithms, it works correctly (does not introduce artifacts/aliasing) for both upsampling and downsampling, as long as you choose a radius appropriate to the resampling factor. Otherwise, to support both directions you need two separate algorithms: area averaging for downsampling (which would degrade to nearest-neighbor for upsampling), and something like (bi-)cubic for upsampling (which would degrade to nearest-neighbor for downsampling).
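A 1-D sketch of gaussian resampling with the blur width tied to the scale factor might look like this. The constant relating sigma to the factor (`sigma_per_scale=0.5`), the cut-off at three sigma, the floor on sigma for upsampling and the function name are all assumptions chosen for illustration; the answer itself only says the radius should be proportional to the reciprocal of the factor.

```python
import math


def gaussian_resample_1d(src, dst_len, sigma_per_scale=0.5):
    """Resample a 1-D list of numbers with a gaussian kernel (illustrative helper)."""
    scale = len(src) / dst_len                   # 5.0 when downsampling by 1/5
    sigma = sigma_per_scale * max(scale, 1.0)    # wider blur for stronger shrinking
    radius = math.ceil(3 * sigma)                # ignore the tails beyond about 3 sigma
    out = []
    for i in range(dst_len):
        center = (i + 0.5) * scale - 0.5         # target sample centre in source coordinates
        base = math.floor(center)
        acc = weight_sum = 0.0
        for j in range(base - radius, base + radius + 1):
            w = math.exp(-0.5 * ((j - center) / sigma) ** 2)
            acc += w * src[min(max(j, 0), len(src) - 1)]   # clamp indices at the borders
            weight_sum += w
        out.append(acc / weight_sum)             # normalise so flat areas stay flat
    return out
```

Because every weight is positive, this kernel cannot overshoot or ring the way sinc-based filters can; the price is a somewhat softer result.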

One way of seeing this nice property of gaussian resampling mathematically is that a gaussian with a very large radius approximates area-averaging, and a gaussian with a very small radius approximates (bi-)linear interpolation.

Having investigated the best-looking downscaling methods, I also found the area method to produce the best results. The one situation where the result is not satisfying is when downscaling an image by a small factor. In that particular case the area method generally blurs the image, but nearest neighbor can perform surprisingly well. The funny thing about gaussian downscaling is that it's more or less equivalent to first blurring the image and then downscaling it using nearest neighbor. –Jun 30 '15 at 11:20